How the file system solves memory

Markdown file gardening already works.

Welcome to the 3rd issue of the newsletter. I'm still figuring out the best way to do this, so each issue looks and feels a bit different. This one is mainly about agent memory and the different ways people are trying to structure it.

I pulled together a few articles from the past two weeks that all landed on the same question: what should an agent remember, and where should that memory live?

The more I read about agent memory, the less magical it looks. The good ones are boring on purpose. Files, notes, plans, indexes, and retrieval that does not dump the whole attic into the prompt.

When I built a basic RAG system from scratch back in 2024 I quickly found out it does not solve everything about retrieval. Actually, quite the contrary. A lot more has to go into making it work well with LLMs. Since then I've used agents a lot and realized how good they are at finding information when you give them an "explore agent" style workflow.

Now, in early 2026, I keep seeing other people arrive at the same conclusion. These are a few examples.

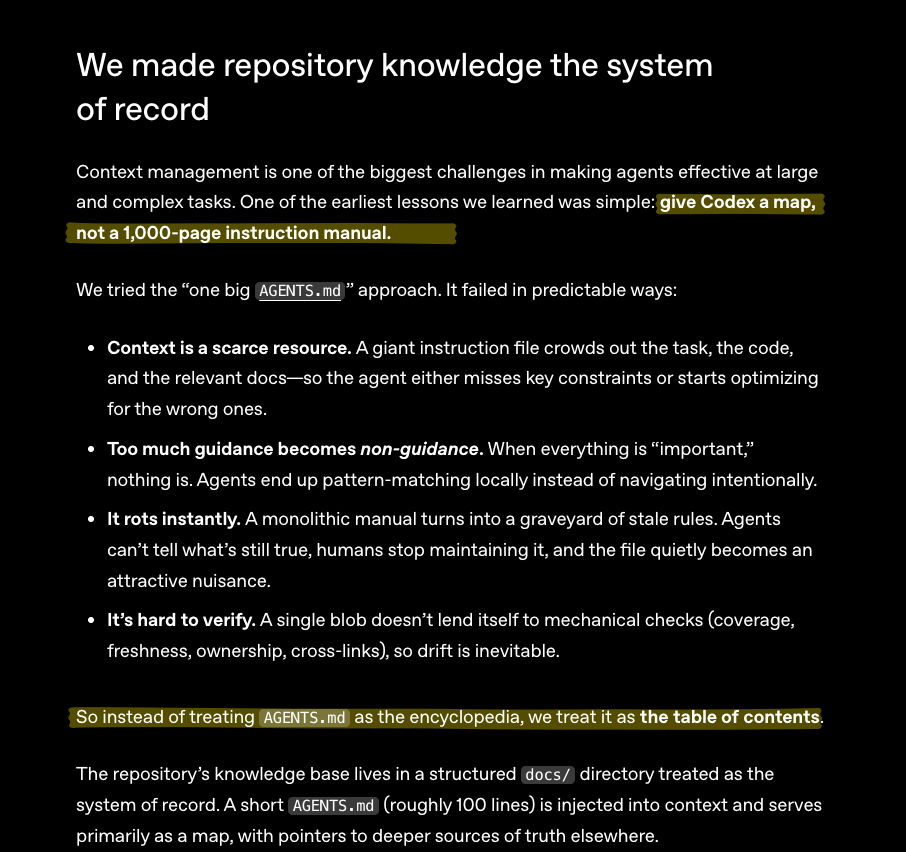

System of Records and Table of Contents

OpenAI's harness engineering write-up frames this as turning the repo into a system of record, and AGENTS.md shrinking to a table of contents.

System of records and Maps

Giuseppe Gurgone's look at Claude Code memory lands on a similar shape from the opposite end. Claude keeps a local MEMORY.md and injects it at session start, but the file has a hard cap. Once it grows, it has to become an index. Same idea again.
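To make the "once it grows, it has to become an index" step concrete, here is a minimal sketch. It is not how Claude Code implements its memory. The `plan_memory` function, the `memory/` folder layout, and the `MAX_LINES` value are all assumptions made up for illustration.

```python
MAX_LINES = 50  # placeholder cap for this sketch, not Claude Code's real limit


def plan_memory(topics: dict[str, list[str]], max_lines: int = MAX_LINES) -> dict[str, str]:
    """Return {relative path: file content} for the memory files to write."""
    inline = ["# Memory"]
    for name, notes in topics.items():
        inline.append(f"## {name}")
        inline.extend(f"- {note}" for note in notes)
    if len(inline) <= max_lines:
        # Small enough: everything lives in the one file injected at session start.
        return {"MEMORY.md": "\n".join(inline) + "\n"}

    # Too big: move the notes into topic files and keep only pointers up front.
    files: dict[str, str] = {}
    index = ["# Memory index"]
    for name, notes in topics.items():
        path = f"memory/{name}.md"
        files[path] = "\n".join(f"- {note}" for note in notes) + "\n"
        index.append(f"- {name}: {len(notes)} notes in {path}")
    files["MEMORY.md"] = "\n".join(index) + "\n"
    return files
```

The function returns a plan instead of writing to disk, so it is easy to inspect. With enough topics the index itself could outgrow the cap, at which point the same trick would have to be applied one level up.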

Is that it? Really?

After reading all this, I can't shake one thought: given how crazy powerful agents can be, it's incredible how hilariously basic every new feature feels underneath. Just unbelievable. Markdown files all the way down.

They surely can’t keep getting away with that

But these ideas also help explain why Addy Osmani's warning about /init-generated AGENTS.md files makes sense. Auto-generated context files tend to repeat what the agent can already discover by listing files and reading docs. The useful memory is the weird stuff: tooling gotchas, local conventions, legacy traps, the things a model will not infer cleanly. We dove deeper into this on our Fragmented podcast last week.

This is all good and pretty sensible. Now let's walk into the mad scientist corner.

Obsidian fanatics are onto something

You might have stumbled across some of this on your timeline. Some people are deep in the weeds of things like Obsidian and knowledge base systems. Now that agents are naturally good with text, especially markdown files, there has been a lot of development in this part of my feed.

Me explaining my knowledge-base system after three coffees.

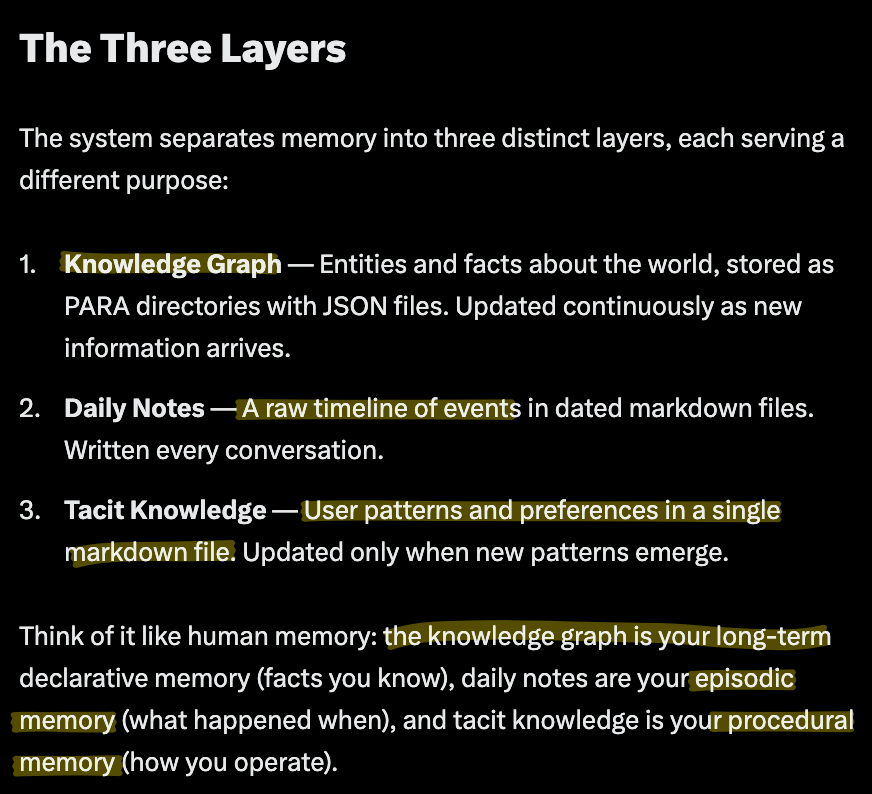

This OpenClaw + PARA + QMD thread breaks memory into layers: durable facts, dated notes, and tacit preferences. Search sits on top. That feels close to the real problem. A memory system has to store facts, preserve chronology, and capture how you tend to work.

Three layers for memory

The Skill Graphs post points in the same direction with markdown nodes, frontmatter, links, and maps of content. Agents are surprisingly good at walking text when the path is well marked.
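A rough sketch of what "walking text when the path is well marked" can look like. It assumes notes with a flat `description:` field in frontmatter and Obsidian-style `[[wikilinks]]`. The `parse_note` and `walk` helpers are hypothetical and not taken from the Skill Graphs post. The walk returns only each note's description, so the agent sees summaries first and opens a full note only when it decides to.

```python
import re
from collections import deque

# Captures the target of [[target]], [[target|alias]] and [[target#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def parse_note(text: str) -> tuple[dict[str, str], str]:
    """Split a note into (frontmatter, body). Handles only flat `key: value` lines."""
    meta: dict[str, str] = {}
    if text.startswith("---\n"):
        head, sep, body = text[4:].partition("\n---\n")
        if sep:
            for line in head.splitlines():
                key, colon, value = line.partition(":")
                if colon:
                    meta[key.strip()] = value.strip()
            return meta, body
    return meta, text


def walk(notes: dict[str, str], start: str, max_depth: int = 2) -> list[tuple[int, str, str]]:
    """Breadth-first walk from `start`, returning (depth, name, description)."""
    seen = {start}
    queue = deque([(start, 0)])
    summaries = []
    while queue:
        name, depth = queue.popleft()
        meta, body = parse_note(notes[name])
        summaries.append((depth, name, meta.get("description", "")))
        if depth == max_depth:
            continue  # do not follow links past the requested depth
        for target in WIKILINK.findall(body):
            target = target.strip()
            if target in notes and target not in seen:
                seen.add(target)
                queue.append((target, depth + 1))
    return summaries
```

Starting the walk at a map of content with a small `max_depth` gives the agent a cheap overview before it commits tokens to any single note.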

Progressive disclosure with MOCs

That is what makes the file system as database experiments interesting, even when they drift into rabbit-hole territory. Models already know how to traverse a file tree. They can list directories, scan filenames, search for strings, read small chunks, and decide whether to go deeper. Keep the memory inspectable and you get a system both humans and agents can debug.
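The traversal moves in that paragraph map onto a handful of very small tools. Here is a minimal sketch using only the Python standard library. The function names (`list_dir`, `grep`, `read_chunk`) are made up for this example and do not come from any particular agent harness.

```python
from pathlib import Path


def list_dir(root: Path, rel: str = ".") -> list[str]:
    """List one directory level; directories get a trailing slash."""
    base = root / rel
    return sorted(p.name + ("/" if p.is_dir() else "") for p in base.iterdir())


def grep(root: Path, needle: str, pattern: str = "*.md") -> list[tuple[str, int, str]]:
    """Case-insensitive substring search: (relative path, line number, line)."""
    hits = []
    for path in sorted(root.rglob(pattern)):
        if not path.is_file():
            continue
        lines = path.read_text(encoding="utf-8").splitlines()
        for number, line in enumerate(lines, start=1):
            if needle.lower() in line.lower():
                hits.append((path.relative_to(root).as_posix(), number, line.strip()))
    return hits


def read_chunk(root: Path, rel: str, start: int = 1, count: int = 20) -> str:
    """Read `count` lines from 1-based line `start` instead of the whole file."""
    lines = (root / rel).read_text(encoding="utf-8").splitlines()
    return "\n".join(lines[start - 1 : start - 1 + count])
```

Because every step is a plain file operation, a human can rerun the same calls to see exactly what the agent saw, which is the debuggability argument in a nutshell.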

As you can see, these ideas overlap quite a bit with the table of contents approach we saw at the beginning.

When markdown is not enough

There is a separate memory layer that deserves more attention though: workflow memory.

Beads, and Ian Bull's write-up on it, are about tracking the work itself. What is blocked, what is next, what changed, what still needs a decision. That is a different problem from semantic recall, and for coding agents it may be the more urgent one. If every new session has to reconstruct task state from scratch, you're stuck in an eternal Groundhog Day.
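To show how workflow memory differs from semantic recall, here is a small sketch of task state with dependencies. This is not Beads' data model or API. The `Task` fields and the `ready`, `blocked`, and `handoff` helpers are assumptions invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    id: str
    title: str
    status: str = "open"  # "open", "in_progress", or "done"
    blocked_by: list[str] = field(default_factory=list)


def _unblocked(task: Task, tasks: dict[str, Task]) -> bool:
    """True when every blocker exists and is done."""
    return all(b in tasks and tasks[b].status == "done" for b in task.blocked_by)


def ready(tasks: dict[str, Task]) -> list[Task]:
    """Open tasks a new session could pick up right now."""
    return [t for t in tasks.values() if t.status == "open" and _unblocked(t, tasks)]


def blocked(tasks: dict[str, Task]) -> list[Task]:
    """Open tasks still waiting on at least one unfinished blocker."""
    return [t for t in tasks.values() if t.status == "open" and not _unblocked(t, tasks)]


def handoff(tasks: dict[str, Task]) -> str:
    """Short summary a new session reads instead of reconstructing state."""
    in_progress = [t for t in tasks.values() if t.status == "in_progress"]
    groups = (("in progress", in_progress), ("ready", ready(tasks)), ("blocked", blocked(tasks)))
    return "\n".join(f"[{label}] {t.id}: {t.title}" for label, group in groups for t in group)
```

The point of the `handoff` string is that it answers "what is next and what is stuck" in a few lines, which no amount of searching over notes will reliably reconstruct.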

Steve Yegge, who created Beads, is explicit about positioning: “Beads isn’t a planning tool, a PRD generator, or Jira. It’s orchestration for what you’re working on today and this week.”

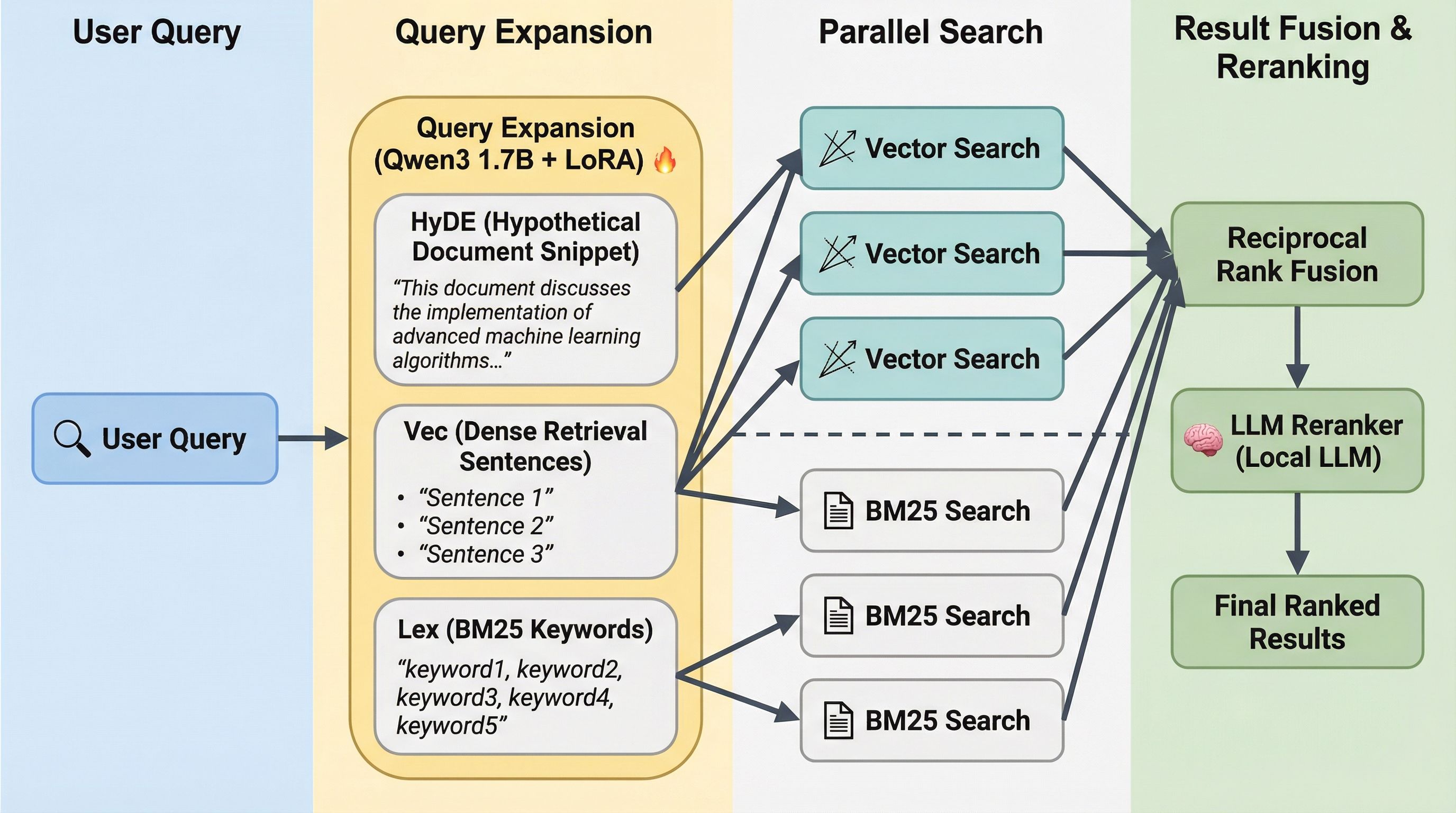

There's also QMD (Query Markup Documents), which is a hybrid retrieval backend.

Basically, it's a local indexing and retrieval tool. It indexes markdown files into a SQLite database. Instead of relying on a single search strategy, it runs two in parallel:

BM25 keyword search: fast, exact-match scoring over your memory documents. Great when you or the agent refer to a specific term, name, or command.

Vector (semantic) search: embeds queries and memory chunks into a shared vector space. Finds conceptually related information even when the wording is completely different.

The idea is that once enabled, QMD works transparently. You don't need to change how you talk to your agent. You just need to keep building the memory docs. I have yet to try this one, but it does look interesting. OpenClaw fans seem to like it.
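For a feel of how two rankings can be combined, here is a self-contained sketch of hybrid search. It is not QMD's code. The BM25 scoring is written out by hand, the `embed` function is a toy character-trigram stand-in for a real embedding model (so it catches near-spellings, not true synonyms), and merging with reciprocal rank fusion is my assumption about one reasonable way to combine the lists, not a claim about how QMD does it.

```python
import math
import re
from collections import Counter


def tokens(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_rank(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[int]:
    """Indices of docs with a positive BM25 score, best first."""
    if not docs:
        return []
    tokenized = [tokens(d) for d in docs]
    avg_len = sum(len(t) for t in tokenized) / len(docs) or 1.0
    df = Counter(term for t in tokenized for term in set(t))
    scored = []
    for i, doc in enumerate(tokenized):
        tf = Counter(doc)
        score = 0.0
        for term in tokens(query):
            if term in tf:
                idf = math.log(1 + (len(docs) - df[term] + 0.5) / (df[term] + 0.5))
                norm = tf[term] + k1 * (1 - b + b * len(doc) / avg_len)
                score += idf * tf[term] * (k1 + 1) / norm
        scored.append((score, i))
    return [i for score, i in sorted(scored, key=lambda s: (-s[0], s[1])) if score > 0]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: character trigram counts."""
    s = " ".join(tokens(text))
    return Counter(s[i : i + 3] for i in range(len(s) - 2))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0


def vector_rank(query: str, docs: list[str]) -> list[int]:
    """Indices of docs with positive cosine similarity to the query, best first."""
    q = embed(query)
    scored = [(cosine(q, embed(d)), i) for i, d in enumerate(docs)]
    return [i for score, i in sorted(scored, key=lambda s: (-s[0], s[1])) if score > 0]


def hybrid_rank(query: str, docs: list[str], k: int = 60) -> list[int]:
    """Reciprocal rank fusion: each ranking contributes 1 / (k + rank) per doc."""
    fused: Counter = Counter()
    for ranking in (bm25_rank(query, docs), vector_rank(query, docs)):
        for rank, i in enumerate(ranking, start=1):
            fused[i] += 1.0 / (k + rank)
    return [i for i, _ in sorted(fused.items(), key=lambda s: (-s[1], s[0]))]
```

In this sketch a query like "deploy" has no exact token match in notes that only say "deployment" or "deploying", so the keyword side returns nothing and the fuzzy side still finds them. A document that ranks well in both lists rises to the top.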

QMD architecture

Some interesting ideas

I touched on part of this a while back in Memory Portability: Owning what matters. My angle there was ownership. If a memory system starts working well, it becomes the hardest thing to switch away from.

The focus here is different, but I still like the ideas behind MCP Memory Service.

Hook-based capture means useful context gets saved without constant babysitting.

Pattern-based extraction keeps it fast, and session-start injection brings it back when a new run begins.

The dream-cycle consolidation is smart too, because a memory layer that only accumulates will eventually collapse under its own junk.

Local-first storage matters for the same reason portability matters. If the memory is yours, you can move it.
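The consolidation idea can be sketched without any model in the loop. The snippet below is not MCP Memory Service's algorithm. The `Memory` fields, the 30-day half-life, the 0.5 threshold, and the "keep the first few words" digest are all assumptions chosen to keep the example small. A real system would more likely have a model write the summary.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Memory:
    text: str
    last_used: date
    uses: int = 1


def strength(m: Memory, today: date, half_life_days: float = 30.0) -> float:
    """Use count, halved for every `half_life_days` since the memory was last used."""
    age = max((today - m.last_used).days, 0)
    return m.uses * 0.5 ** (age / half_life_days)


def consolidate(memories: list[Memory], today: date, keep_above: float = 0.5) -> list[Memory]:
    """Keep strong memories verbatim; fold weak ones into one short archive entry."""
    strong = [m for m in memories if strength(m, today) >= keep_above]
    weak = [m for m in memories if strength(m, today) < keep_above]
    if weak:
        # Crude compression: keep only the first six words of each weak memory.
        digest = "; ".join(" ".join(m.text.split()[:6]) for m in weak)
        strong.append(Memory(f"Archived: {digest}", today))
    return strong
```

Recently used or frequently used entries survive untouched, while stale ones shrink into a single line that can still be searched.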

I should say that in Obsidian I'm not a die-hard fan of any particular method (like PARA or whatever). I've ended up with a system that works for me, and recently I've been experimenting with a few things.

So some of the findings above are close to the setup I keep drifting towards: simple primitives, good links, and enough structure that the agent can scan first and go deep only when it needs to.

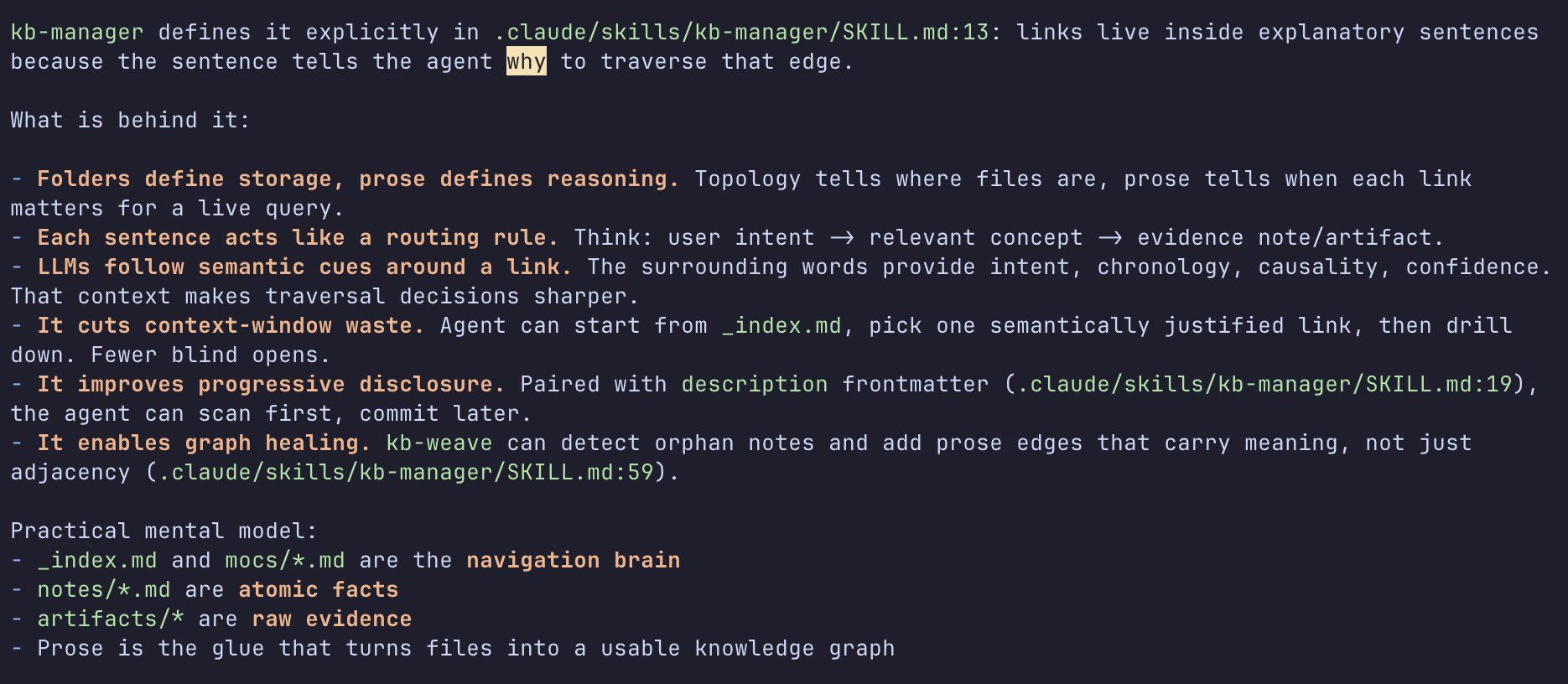

I asked the agent to explain the current state of my kb-manager skill, and this is what it replied:

My knowledge-base approach

So my read after this week's links is that "agent memory" is at least three different things: repo memory for rules and architecture, memory for facts and preferences, and workflow memory for task state and handoffs.

Different tools are solving different layers, which is why the space still feels messy. But the good ideas are starting to repeat.

Keep the entry point short.

Store the real knowledge outside the prompt.

Capture only what future work will actually need.

Let old memory decay or compress.

Keep the whole thing portable.

Maybe that is the clearest way to think about the whole category right now. We are building places agents can come back to and still find their way.

Elsewhere in the Latent Space 🌌

Semantic Ablation and why AI generated text sounds like a lobotomized corpo bot.

Linus’s law states that “given enough eyeballs, all bugs are shallow.” Anthropic: Got it! Introducing Claude Code Review

Peter Steinberger: I'm using AI to detect and block AI. The thing about LLMs atm is that they create new problems that require more LLMs to fix.

Main reason people are getting mac minis to run OpenClaw is to have iMessage access. Just Apple things.

Cursor goes to war. It turns out a VS Code fork subsidizing SOTA model usage isn't a great business.